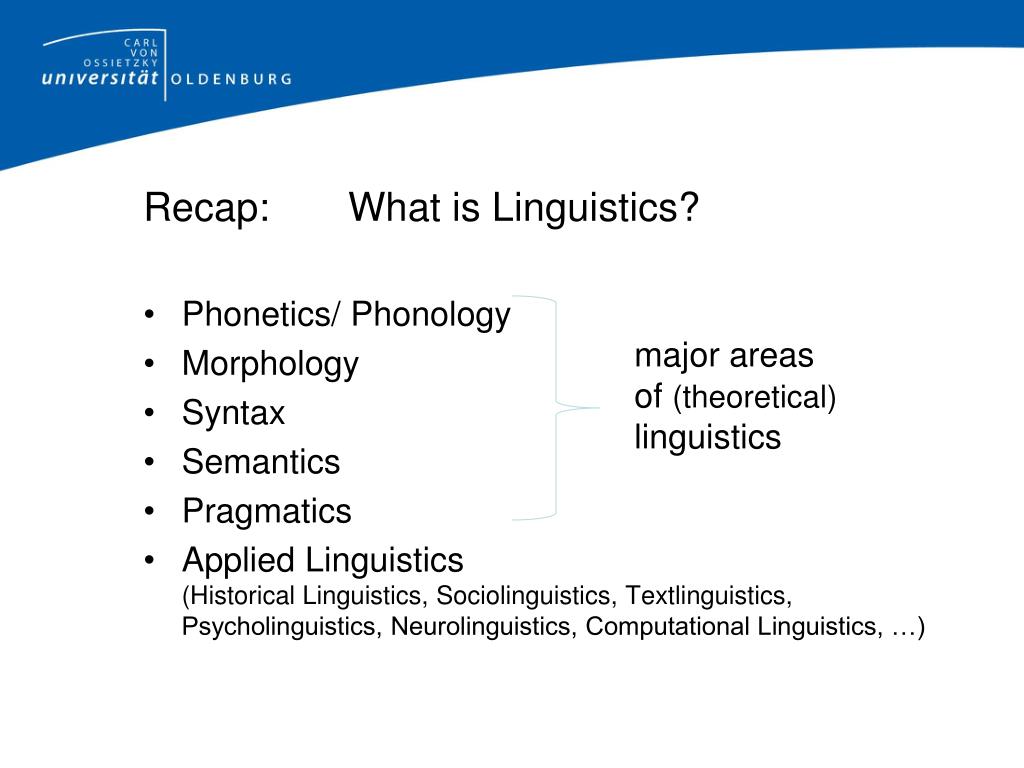

A useful addition to the studies on prescriptivism in grammars and usage handbooks. Dictionaries have been created throughout the entire history of lexicography in various cultures, regions and countries [9, p.].

Keywords: lexicography, typological classification.

"This linguistic choice reflects a subtle yet profound shift in perception: the AI, not the user, is the one 'hallucinating'."

Mugglestone examines how descriptive and prescriptive aspects can coexist in a single lexicographic work, focusing in particular on the Oxford English Dictionary.

… with the concept and the object of description, as well as the linguistic characteristics of the term as an element of the language for specific purposes. The dictionaries steer a course between semantic definition and comprehensive knowledge, combining the best of both approaches. In 3,250 entries, this dictionary spans grammar, phonetics, semantics, languages (spoken and written), dialects, and sociolinguistics. WSK Online is an online database covering all the major areas of linguistics and communication science.

Glossary of Linguistic Terms: alveolar, a consonant produced by the tip of the tongue against the ridge behind the upper teeth, e.g., /t, d, s, z, n, l/.

"'Hallucinate' is an evocative verb implying an agent experiencing a disconnect from reality," he continued.

The third edition of The Concise Oxford Dictionary of Linguistics is an authoritative and invaluable reference source covering every aspect of the wide-ranging field of linguistics.

Adding that AI tools using large language models (LLMs) "can only be as reliable as their training data", she concluded: "Human expertise is arguably more important - and sought after - than ever, to create the authoritative and up-to-date information that LLMs can be trained on."

AI can hallucinate in a confident and believable manner, which has already had real-world impacts. A US law firm cited fictitious cases in court after using ChatGPT for legal research, while Google's promotional video for its AI chatbot Bard made a factual error about the James Webb Space Telescope.

Dr Henry Shevlin, an AI ethicist at Cambridge University, said: "The widespread use of the term 'hallucinate' to refer to mistakes by systems like ChatGPT provides a fascinating snapshot of how we're anthropomorphising AI."

This high conceptual complexity explains why dictionaries for translation have been the subject of a great number of lexicographic studies, both theoretical and practical, including studies of dictionary access.
